When 90% of DAO members don't vote, Vitalik's solution is to assign each person an AI advisor.

Vitalik proposes using personal large language models combined with zero-knowledge proofs to address the issues of voter apathy and information asymmetry in DAO governance.

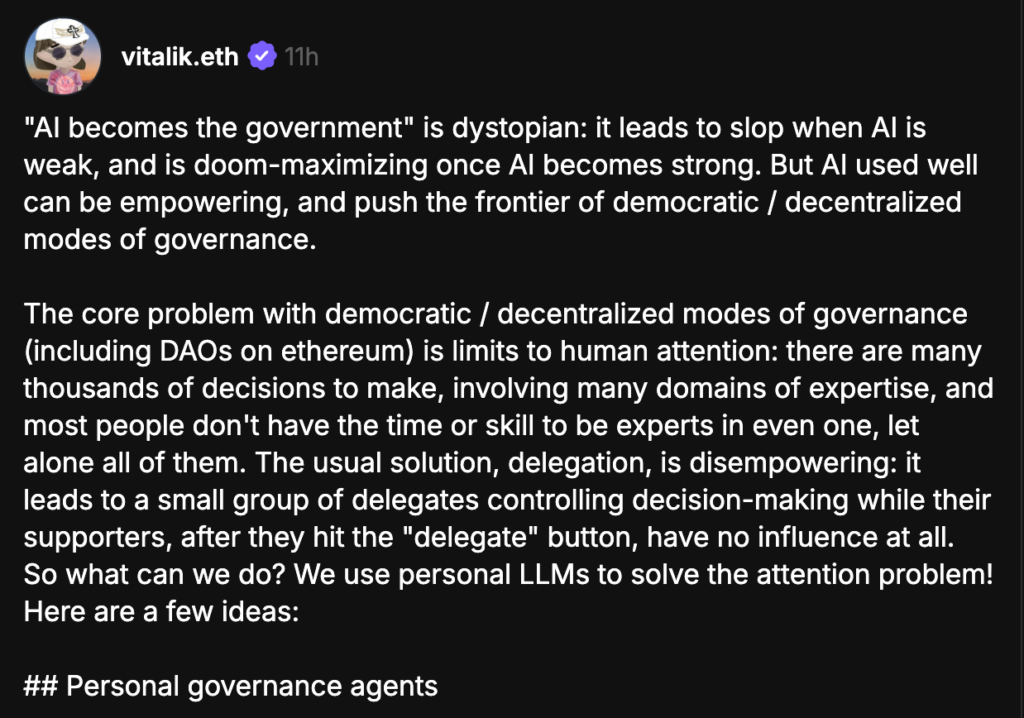

Last night, Vitalik posted about how combining cryptographic techniques (ZK, MPC) with LLMs can compensate for the weaknesses of democratic governance. Rather than having AI rule humans, he argues, AI should serve as each person’s digital secretary, filtering information and voicing that person’s preferences:

“AI becoming the government” is dystopian: when AI is weak, it leads to governance collapse; when AI is strong, it maximizes destruction risk. But if used properly, AI can empower humans and push the boundaries of democratic/decentralized governance.

Three Structural Flaws in DAO Governance

We know that while the ideal of decentralized autonomous organizations (DAOs) is appealing, practical implementation faces many challenges.

First is voter apathy. Average turnout in major DAOs ranges from 17% to 25%, and on some proposals fewer than 10% of token holders participate. This isn’t because token holders don’t care, but because an active DAO might see hundreds of proposals a year, each involving complex technical decisions such as smart contract upgrades, fund allocations, or parameter adjustments.

For an average token holder, reading and voting on each proposal individually is far too time-consuming relative to the value of their governance tokens.

Second is oligarchization. The top 10 voters in Compound control 57.86% of voting power; in Uniswap, it’s 44.72%. Token-weighted voting inherently favors capital concentration, and voter apathy exacerbates this skew.
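To make the concentration figures concrete, here is a minimal sketch of how a "top N share" can be computed, and how low turnout amplifies it. The balances and turnout pattern below are hypothetical and are not data from Compound or Uniswap; the function names are illustrative only.

```python
def top_n_share(balances: list[float], n: int = 10) -> float:
    """Fraction of total voting power held by the n largest balances."""
    total = sum(balances)
    if total <= 0:
        raise ValueError("total voting power must be positive")
    largest = sorted(balances, reverse=True)[:n]
    return sum(largest) / total


def top_n_share_of_cast_votes(balances: list[float], voted: list[bool], n: int = 10) -> float:
    """Same metric, but counted only over the tokens that actually voted."""
    cast = [b for b, v in zip(balances, voted) if v]
    return top_n_share(cast, n)


# Hypothetical DAO: 3 large holders and 100 small holders with 4 tokens each.
balances = [300.0, 200.0, 100.0] + [4.0] * 100

# Share of all voting power held by the 3 largest holders.
share_of_supply = top_n_share(balances, n=3)

# Assume every large holder votes but only 10 of the 100 small holders do.
voted = [True] * 3 + [True] * 10 + [False] * 90
share_of_votes = top_n_share_of_cast_votes(balances, voted, n=3)

print(f"top 3 share of supply:     {share_of_supply:.2%}")
print(f"top 3 share of votes cast: {share_of_votes:.2%}")
```

In this toy distribution the three largest holders own 60% of the supply, but once most small holders stay home they account for almost 94% of the votes actually cast.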

Third is information asymmetry. Most token holders lack the time or expertise to evaluate proposals involving oracle design or liquidity pool parameters.

The result: rational indifference, minority dominance, and vulnerabilities exploited by governance attackers.

Vitalik’s Solution: Personal LLM + Cryptography

Vitalik’s proposed solution has three layers:

First layer: Personal governance agent. Each person runs their own agent, which infers their preferences from their writing, conversation history, or direct statements. In other words, it’s a private governance aide that quickly reviews hundreds of proposals and tells you in three sentences which ones are worth your participation.
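The triage step can be sketched as follows. This is only an illustration of the filtering idea: a real agent would presumably use an LLM to infer preferences and read proposals, whereas this sketch substitutes a hand-written keyword weighting. The `Proposal` type, the `prefs` dictionary, and the scoring rule are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    id: str
    text: str


def relevance(proposal: Proposal, prefs: dict[str, float]) -> float:
    """Sum the weights of preference terms that appear in the proposal text.

    Keyword matching is a stand-in here for an LLM judging relevance.
    """
    words = set(proposal.text.lower().split())
    return sum(weight for term, weight in prefs.items() if term in words)


def triage(proposals: list[Proposal], prefs: dict[str, float], top_k: int = 3) -> list[str]:
    """Return the ids of the top_k proposals with a non-zero relevance score."""
    scored = [(relevance(p, prefs), p) for p in proposals]
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [p.id for _, p in scored[:top_k]]


# Hypothetical preferences and proposals.
prefs = {"treasury": 2.0, "grants": 1.5, "oracle": 1.0}
proposals = [
    Proposal("P1", "Upgrade the price oracle contract"),
    Proposal("P2", "Allocate treasury funds to grants program"),
    Proposal("P3", "Adjust liquidity pool fee parameter"),
]

worth_reading = triage(proposals, prefs, top_k=2)
print(worth_reading)
```

With these inputs the agent surfaces P2 first and P1 second, and drops P3 because nothing in it matches the holder's stated interests.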

Second layer: AI-assisted citizen dialogue. Let the agent help summarize your views, convert them into shareable content, and facilitate structured discussions similar to pol.is and Community Notes, identifying consensus and reducing polarization.

Third layer: AI-integrated prediction markets. If a governance mechanism values high-quality input—be it proposals or arguments—you can create a prediction market: anyone can submit input, and AI can facilitate bets on tokens representing that input; if the mechanism “adopts” the input, it pays out a reward to the token holders.
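The payout rule described in the third layer can be sketched as a simple settlement function. The article does not specify how the reward is divided, so the pro-rata split, the fixed reward per adopted input, and all names below are assumptions made for illustration.

```python
def settle(
    holdings: dict[str, dict[str, float]],
    adopted: set[str],
    reward_per_input: float,
) -> dict[str, float]:
    """Pay each adopted input's reward to its token holders, pro rata.

    holdings maps input id -> {holder: tokens held for that input}.
    Tokens for inputs that were not adopted pay nothing.
    """
    payouts: dict[str, float] = {}
    for input_id in adopted:
        holders = holdings.get(input_id, {})
        total = sum(holders.values())
        if total == 0:
            continue  # nobody bet on this input
        for holder, tokens in holders.items():
            payouts[holder] = payouts.get(holder, 0.0) + reward_per_input * tokens / total
    return payouts


# Hypothetical market: two submitted inputs, one of which is adopted.
holdings = {
    "proposal-A": {"alice": 30.0, "bob": 10.0},
    "proposal-B": {"carol": 5.0},
}
payouts = settle(holdings, adopted={"proposal-A"}, reward_per_input=100.0)
print(payouts)
```

Here alice and bob split the reward 75 to 25 in proportion to their holdings, while carol, who backed an input that was not adopted, receives nothing. That asymmetry is what gives bettors an incentive to find good inputs early.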

Privacy-Preserving Decentralized Governance

Vitalik notes that one of the biggest weaknesses of highly decentralized/democratic governance is its poor performance when critical decisions depend on secret information. Common scenarios include:

- Participating in adversarial conflicts or negotiations

- Internal dispute resolution

- Compensation or fund allocation decisions

He proposes using zero-knowledge proofs (ZKPs) to verify voting eligibility without revealing identities; trusted execution environments (TEEs) to let personal LLMs participate in decision-making inside secure enclaves; and secure multi-party computation (MPC) to handle confidential governance data.
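To give a feel for what "verifying eligibility" means in practice, the sketch below checks that a voter belongs to a committed set using a Merkle inclusion proof. Note that this is not zero-knowledge: the verifier sees the leaf and the path. It is shown only as the statement a ZK system might prove privately, on the assumption that eligibility is represented as membership in a Merkle tree. The helper names and the padding rule are illustrative choices, not part of Vitalik's proposal.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Build every level of a Merkle tree, from hashed leaves up to the root."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [h(leaf) for leaf in leaves]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            # Toy padding rule: duplicate the last node on odd-sized levels.
            level = level + [level[-1]]
            levels[-1] = level
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Return the sibling hashes from leaf to root as (sibling, node_is_left)."""
    path = []
    for level in levels[:-1]:
        path.append((level[index ^ 1], index % 2 == 0))
        index //= 2
    return path


def verify(leaf: bytes, path: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from the leaf and its path, then compare."""
    node = h(leaf)
    for sibling, node_is_left in path:
        node = h(node + sibling) if node_is_left else h(sibling + node)
    return node == root


# Hypothetical eligible-voter set.
voters = [b"alice", b"bob", b"carol"]
levels = build_levels(voters)
root = levels[-1][0]

proof = prove(levels, index=2)
print(verify(b"carol", proof, root))    # carol is in the set
print(verify(b"mallory", proof, root))  # mallory is not
```

A zero-knowledge version would have the voter prove "I know a leaf and a path that hash to this root" without disclosing either, so the DAO learns that the voter is eligible but not which member they are.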

In simple terms, this architecture aims not to replace human judgment with AI, but to enhance the quality of every human decision through AI.

AI as the Engine, Humans as the Steering Wheel

Vitalik’s analogy of “AI as the engine, humans as the steering wheel” is elegant, but how well the steering works depends on who is holding the wheel. If 90% of token holders fully delegate control to their LLMs, and those LLMs happen to be trained on similar data and reasoning patterns, decentralized governance could ultimately collapse into a homogeneous AI consensus: more efficient than human voting, but more susceptible to systemic deception.

The feasibility of this vision hinges on a fundamental premise: how many people are willing to invest time in training and calibrating their AI agents to improve governance quality? If the answer is “as few as those who currently vote,” then AI-driven governance might just replace human whales with AI assistants for whales.

At the very least, Vitalik has identified the right problem: the bottleneck in decentralized governance isn’t technology, but attention. If AI can help allocate attention rather than replace judgment, this direction is worth serious exploration.
