2026 Outlook: When an 81,000-User Sample Meets the Economic Index — How AI Productivity Narratives and Job Anxiety Coexist

Research Background and Data Integration: From Traffic Evidence to 81,000 Subjective Narratives

Public discussions of generative AI have traditionally relied on two types of evidence: macro-level industry statistics and product-level usage and behavior logs. The former updates slowly and struggles to capture mechanisms at the job level; the latter reflects real behavior but rarely shows how individuals interpret their own circumstances.

Image source: Anthropic Official Report

In April 2026, Anthropic released “What 81,000 People Told Us About the Economics of AI.” The value of this report lies not in providing a “final answer,” but in connecting two key types of information:

- Platform-side observable data: which occupational tasks are using Claude and how usage intensity is changing.

- User-side subjective feedback: whether people feel more efficient and whether they are more concerned about being replaced.

Historically, discussions have either leaned macro (employment rates, industry growth) or focused on user experience (“I feel faster”). This report combines both, shifting the conversation from “opinion versus opinion” to a synthesis of “data plus perception.”

Three Key Findings: Exposure, Career Stage, Acceleration Anxiety

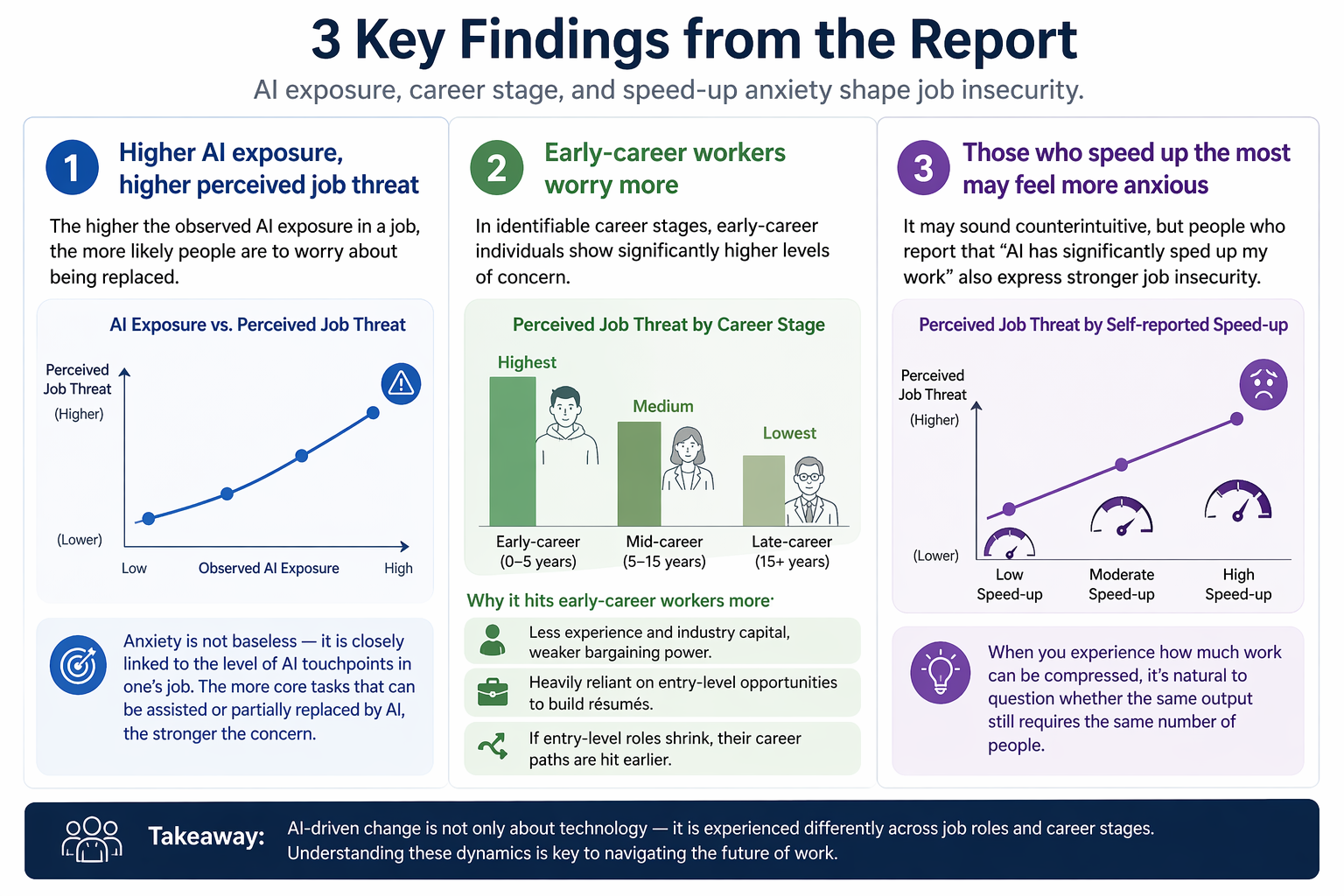

Finding 1: Higher AI Exposure Increases Perceived Job Threat

The report identifies a clear relationship: the greater the observed exposure to AI in a profession, the more likely respondents are to express concerns that their jobs could be replaced.

This suggests that many people's anxieties are not groundless but track how far AI already reaches into the tasks their roles involve. If several core tasks in a position can already be assisted or partially replaced by AI, people in that position are more likely to worry about future changes. That is a form of rational risk awareness.

Finding 2: Early-Career Workers Are More Concerned

The report notes that, among respondents whose career stage could be identified, early-career workers express more pronounced concerns.

This aligns with 2026 labor market observations, such as increased pressure on youth employment.

Why is this more common among early-career groups?

- Less experience and fewer industry resources, resulting in weaker bargaining power.

- Greater reliance on entry-level opportunities to build résumés.

- If entry-level roles contract, career paths are disrupted earlier.

Finding 3: Those Experiencing the Most Acceleration May Also Feel More Anxious

Though counterintuitive, this is significant:

Some who report that “AI has significantly increased my speed” also express stronger job insecurity.

The underlying logic is straightforward:

When you see firsthand how much faster the work can be done, you are more likely to ask whether the same output still requires the same number of people.
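The arithmetic behind that worry can be sketched in a few lines. This is a hypothetical illustration, not a calculation from the report: the function name `headcount_needed` is invented here, and it assumes that total demand for output stays fixed and that every worker gets the same speedup, both of which are strong simplifications.

```python
import math


def headcount_needed(current_headcount: int, speedup: float) -> int:
    """Workers needed to produce the same total output after a uniform speedup.

    Hypothetical assumptions: demand for output is fixed, and every worker
    becomes `speedup` times as productive (1.0 means no change).
    """
    if current_headcount < 0:
        raise ValueError("current_headcount must be non-negative")
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    # Round up: a fraction of a worker still means one more person.
    return math.ceil(current_headcount / speedup)
```

Under these assumptions, `headcount_needed(10, 1.5)` returns 7. If demand for output grows as the work gets cheaper or faster, the number would be higher, which is why the same speedup can lead to very different employment outcomes.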

Where Does Productivity Really Show: Scope Expansion vs. Speed

Many assume AI’s value is simply “faster.” However, this report highlights another, potentially more important dimension: “scope expansion.”

- Scope expansion: tasks that were previously unmanageable are now possible.

- Speed: tasks that were already feasible are now completed faster.

Scope expansion is a frequent theme in the report.

This means AI is not just an efficiency tool. It is also a force multiplier for what a person is capable of doing.

Different Implications for Different Groups

- For high-skill roles: it can mean tackling complex tasks more deeply and systematically.

- For some low-wage roles: it may enable side hustles, cross-skill exploration, and greater independent delivery.

- For organizational management: job boundaries may be redrawn, and division of labor may shift more rapidly.

Why “More Efficient” Does Not Mean “More Secure”

This is one of the most overlooked points in current debates.

Many reports emphasize:

“Employee efficiency has increased, so technology is inclusive.”

But in reality, efficiency gains answer only “how much output has changed,” not “how returns are distributed.”

The Same Efficiency Dividend Can Go Four Different Ways

- To employees: higher income, less repetitive work, more autonomy.

- To companies: higher output with the same headcount, or lower costs for the same output.

- To customers: lower prices, faster delivery.

- Absorbed by the system: metrics improve, but frontline experience becomes “more tasks, faster pace.”
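The gap between "how much output changed" and "who receives the gain" can be made concrete with a small sketch. Everything here is a hypothetical illustration: the function `split_dividend`, the channel names, and the shares are invented for this example and do not come from the report.

```python
# The four destinations of an efficiency gain described in the list above.
CHANNELS = ("employees", "company", "customers", "absorbed")


def split_dividend(total_gain: float, shares: dict[str, float]) -> dict[str, float]:
    """Allocate one efficiency gain across the four channels.

    `shares` must give a non-negative fraction for every channel,
    and the fractions must sum to 1.
    """
    if set(shares) != set(CHANNELS):
        raise ValueError(f"shares must cover exactly: {CHANNELS}")
    if any(value < 0 for value in shares.values()):
        raise ValueError("shares must be non-negative")
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    return {channel: total_gain * shares[channel] for channel in CHANNELS}
```

Two organizations can report the same `total_gain` while passing very different `shares` to this function. An output metric alone cannot tell those cases apart, which is the point of separating efficiency from distribution.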

The report also notes respondents saying:

After using AI, supervisors and clients expect “more, faster.”

This explains why many people report being “more efficient” yet “more anxious” at the same time.

Integrating the Latest Public Information: What We Can and Cannot Confirm

Drawing on Anthropic’s Economic Index materials from 2026 (including the January and March reports and survey framework), the most reliable conclusions at present are:

What We Can Reasonably Confirm

- AI has achieved real penetration in some occupational tasks, moving beyond the conceptual stage.

- Subjective job concerns are directionally aligned with task exposure.

- Productivity gains are real, reflected not only in “speed” but also in “scope.”

What Cannot Be Over-Extrapolated

- This sample cannot be used to infer the net national employment impact.

- The experiences of individual account holders do not represent the experiences of all employees at a company.

- “Increased concern” does not directly equal “unemployment.”

Why Caution Is Necessary

The survey relied on open-ended responses classified by a model, rather than on a strictly structured questionnaire with formal sampling.

It is a valuable reference, but it is better suited to identifying trends and generating hypotheses than to supporting definitive conclusions.

Actionable Recommendations for Enterprises, Individuals, and Policymakers

To move beyond discussion, conclusions should be translated into action items.

For Enterprises: Upgrade “Efficiency KPIs” to “Dual Metrics”

Track both categories of metrics:

- Output metrics: time spent, delivery volume, error rate, rework rate.

- Sustainable people-effectiveness metrics: perceived workload, turnover risk, training coverage, role transition success rate.

A common pitfall is rolling out tools without also adjusting job design and training mechanisms.

If that happens, short-term efficiency may rise while long-term organizational stability suffers.
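One way to put the dual-metric idea into practice is to record both categories side by side and flag periods where output improves while people-related indicators worsen. The sketch below is a minimal illustration under stated assumptions: the class `TeamSnapshot`, its field names and scales, and the rule in `needs_review` are all hypothetical choices, not a method described in the report.

```python
from dataclasses import dataclass


@dataclass
class TeamSnapshot:
    # Output metrics
    hours_per_task: float           # lower is better
    delivery_volume: int            # higher is better
    error_rate: float               # fraction, lower is better
    rework_rate: float              # fraction, lower is better
    # Sustainable people-effectiveness metrics
    perceived_workload: float       # e.g. a survey score, higher means heavier
    turnover_risk: float            # fraction, lower is better
    training_coverage: float        # fraction, higher is better
    role_transition_success: float  # fraction, higher is better


def output_improved(before: TeamSnapshot, after: TeamSnapshot) -> bool:
    """True if no output metric got worse between the two snapshots."""
    return (
        after.delivery_volume >= before.delivery_volume
        and after.hours_per_task <= before.hours_per_task
        and after.error_rate <= before.error_rate
        and after.rework_rate <= before.rework_rate
    )


def people_strain_increased(before: TeamSnapshot, after: TeamSnapshot) -> bool:
    """True if workload or turnover risk rose between the two snapshots."""
    return (
        after.perceived_workload > before.perceived_workload
        or after.turnover_risk > before.turnover_risk
    )


def needs_review(before: TeamSnapshot, after: TeamSnapshot) -> bool:
    """Flag the pattern where output looks better but people metrics look worse."""
    return output_improved(before, after) and people_strain_increased(before, after)
```

A team whose delivery volume rises while perceived workload also rises would be flagged by `needs_review`, prompting a look at job design and training rather than treating the efficiency figure as the whole story. The thresholds are deliberately simple; a real deployment would need tolerances and agreed measurement definitions.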

For Individuals: Upgrade AI Use to “Career Asset Building”

Prioritize three directions:

- Use AI for reusable methods, not just one-off speed boosts.

- Strengthen skills in problem definition, cross-team collaboration, and accountability for results.

- Build a track record of verifiable achievements (projects, cases, industry insights) to enhance irreplaceability.

For Policymakers and Institutions: Focus on Early-Career Buffer Zones

If early-career groups are more sensitive, public support should be more proactive:

- Career transition and retraining vouchers.

- AI co-training programs for entry-level roles.

- More granular data releases on job transitions.

Conclusion

This study, based on 81,000 respondents, indicates that AI's economic impact has at least two dimensions that must be evaluated in parallel: task-level efficiency gains, and changes in workers' job expectations and in how returns are distributed. Focusing solely on the former risks overestimating inclusiveness; defining risk only by the latter underestimates the real gains from expanded capability boundaries.

A robust analytical framework should recognize that productivity improvement and employment uncertainty can coexist, with significant heterogeneity across job exposure, career stages, and organizational management. As a result, the focus of future discussions should shift from “whether to adopt AI” to “how to optimize distribution mechanisms, mitigate transition costs, and ensure sustainable career mobility while increasing output.”

After 2026, the core of AI economic research and governance is not to seek a single conclusion, but to build a comprehensive evaluation system that can simultaneously track efficiency, distribution, and job stability.
