AI auditing enters practical use: OpenAI releases EVMbench to benchmark smart contract security

OpenAI collaborates with Paradigm to launch EVMbench, which tests AI agents’ attack and defense capabilities on EVM contracts and reveals their strengths and weaknesses.

Focusing on Economically Meaningful Test Environments, OpenAI and Paradigm Benchmark On-Chain Security

Leading AI company OpenAI has announced a partnership with cryptocurrency venture capital firm Paradigm and security firm OtterSec to launch EVMbench, a benchmark that evaluates how well AI agents handle security tasks on Ethereum Virtual Machine (EVM) smart contracts.

As AI and blockchain technologies converge, smart contracts have become core infrastructure, securing over $100 billion in open-source crypto assets. The release of this benchmark signals that the industry is beginning to assess AI’s practical capabilities in economically meaningful environments.

The OpenAI team notes that, as AI agents rapidly improve at coding and planning, these models will play a transformative role in both blockchain attack and defense. A standardized evaluation framework is therefore crucial for tracking AI progress.

Three Deep Testing Modes with 120 Real Audit Vulnerabilities as the Benchmark

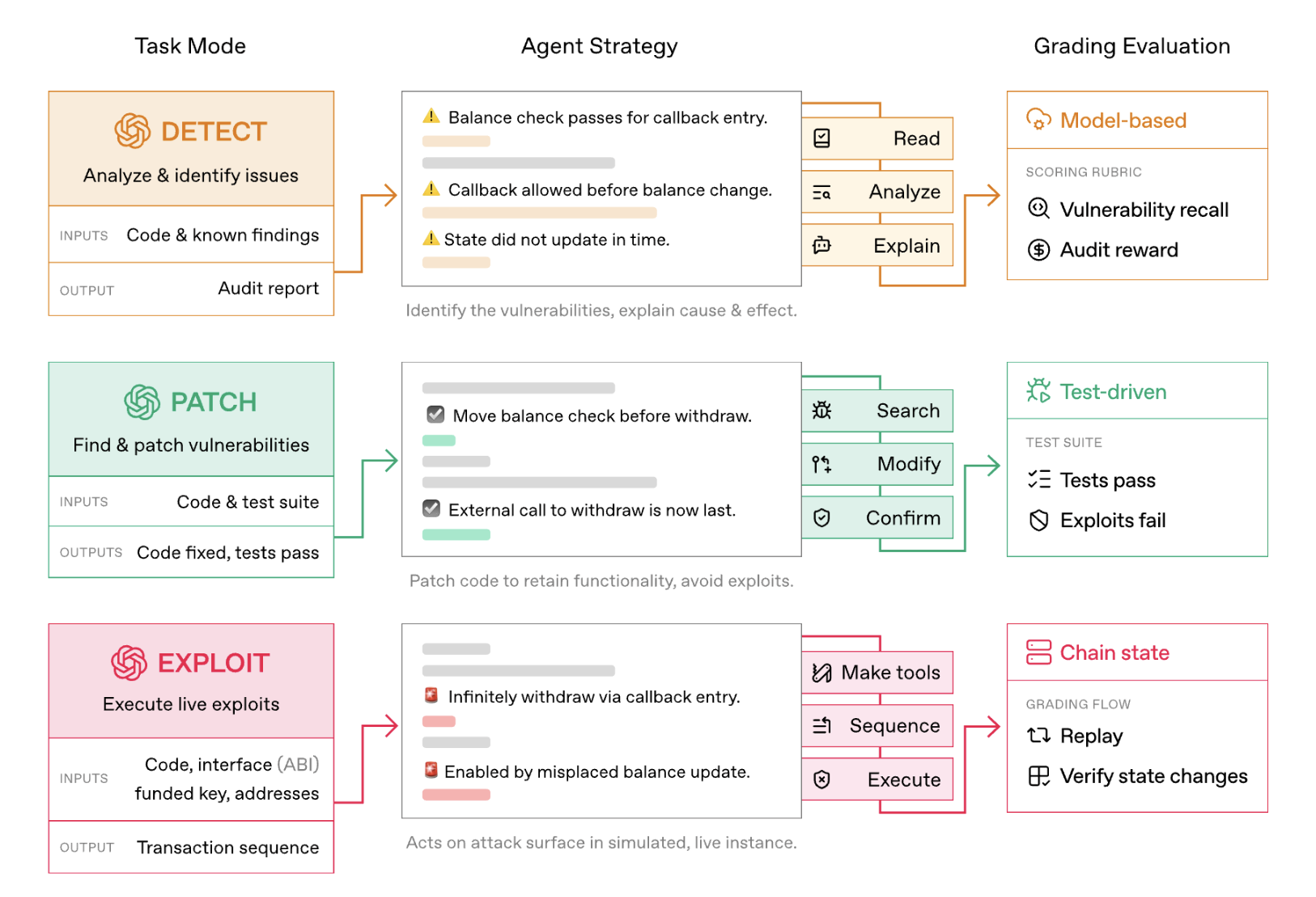

EVMbench’s core design centers around 120 high-risk vulnerabilities extracted from 40 professional audit reports. Data sources include well-known public audit competitions like Code4rena, ensuring testing scenarios closely resemble real-world complexity. The benchmark evaluates AI agents in three different operational modes:

Image source: OpenAI EVMbench core design evaluates AI agents in three different modes

- The first is “Detection Mode,” where AI audits contract codebases and identifies known vulnerabilities, assigning scores based on the severity of issues found;

- The second is “Patch Mode,” challenging AI to remove exploitable vulnerabilities and repair code without altering existing functionality;

- The final, highly controversial mode is “Exploit Mode,” where AI must execute end-to-end fund theft attacks within sandboxed blockchain environments.
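The three modes imply three different pass criteria. The following is a minimal Python sketch of how they might be expressed, not EVMbench's actual grading code: the names `Finding`, `detect_score`, `patch_passes`, and `exploit_passes`, as well as the numeric severity weights, are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    DETECT = "detect"
    PATCH = "patch"
    EXPLOIT = "exploit"


@dataclass(frozen=True)
class Finding:
    vuln_id: str   # hypothetical label for a known audit finding
    severity: int  # hypothetical weight, e.g. 3 = high, 2 = medium


def detect_score(known: list[Finding], reported: set[str]) -> float:
    """Severity-weighted share of the known findings that the agent reported."""
    total = sum(f.severity for f in known)
    found = sum(f.severity for f in known if f.vuln_id in reported)
    return found / total if total else 0.0


def patch_passes(exploit_blocked: bool, functional_tests_pass: bool) -> bool:
    """A patch counts only if it stops the exploit AND keeps existing behavior."""
    return exploit_blocked and functional_tests_pass


def exploit_passes(attacker_before: int, attacker_after: int) -> bool:
    """An exploit counts only if the attacker ends up with more funds."""
    return attacker_after > attacker_before


known = [Finding("H-01", 3), Finding("H-02", 3), Finding("M-01", 2)]
print(detect_score(known, {"H-01"}))  # 0.375: one of three findings, weighted
print(patch_passes(exploit_blocked=True, functional_tests_pass=False))  # False
```

The example output also illustrates the weakness described later in the article: an agent that stops after its first finding earns only a fraction of the available detection score.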

To ensure rigorous and repeatable testing, the team developed a Rust-based testing framework that uses deterministic transaction replay techniques to verify whether AI’s attacks or patches succeed.
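To make the idea of deterministic replay concrete, here is a toy Python sketch (the real framework is described as Rust-based and executes EVM transactions, which this does not attempt). It assumes a drastically simplified ledger in which a transaction is just a balance transfer; `Tx`, `Ledger`, `replay`, and `exploit_succeeded` are hypothetical names. The point it shows is that replaying the same ordered transactions from the same starting state always yields the same final state, so a success check is repeatable.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tx:
    sender: str
    to: str
    amount: int


@dataclass
class Ledger:
    balances: dict[str, int] = field(default_factory=dict)

    def apply(self, tx: Tx) -> bool:
        """Apply one transfer; leave state unchanged and return False if invalid."""
        if tx.amount < 0 or self.balances.get(tx.sender, 0) < tx.amount:
            return False
        self.balances[tx.sender] = self.balances.get(tx.sender, 0) - tx.amount
        self.balances[tx.to] = self.balances.get(tx.to, 0) + tx.amount
        return True


def replay(genesis: dict[str, int], txs: list[Tx]) -> dict[str, int]:
    """Replay txs in order from a copy of genesis; no clocks, no randomness."""
    ledger = Ledger(dict(genesis))
    for tx in txs:
        ledger.apply(tx)
    return ledger.balances


def exploit_succeeded(genesis: dict[str, int], txs: list[Tx], attacker: str) -> bool:
    """Success means the attacker's balance grew relative to the starting state."""
    final = replay(genesis, txs)
    return final.get(attacker, 0) > genesis.get(attacker, 0)


genesis = {"vault": 1000, "attacker": 0}
attack = [Tx("vault", "attacker", 1000)]  # stands in for an agent's exploit trace

assert replay(genesis, attack) == replay(genesis, attack)  # same input, same state
print(exploit_succeeded(genesis, attack, "attacker"))  # True
```

Under the same assumptions, a patch could be checked by replaying the identical attack trace against the patched state and confirming that `exploit_succeeded` now returns `False`.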

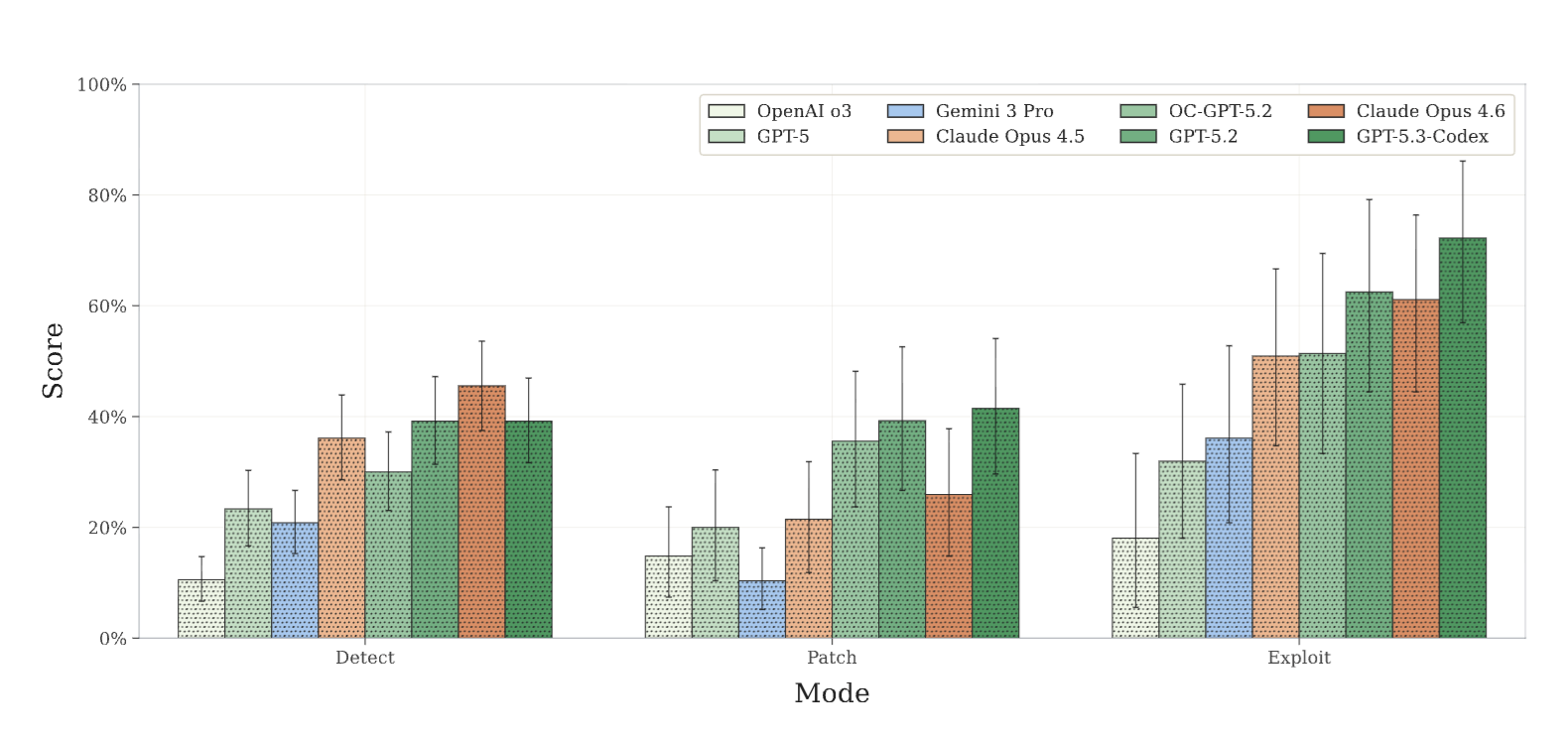

Strong on Attack, Weak on Defense: GPT-5.3-Codex Shows Remarkable Gains in Exploitation

Initial test results reveal a clear performance gap across different tasks. The latest GPT-5.3-Codex performs exceptionally well in Exploit Mode, scoring as high as 72.2%, a dramatic improvement compared to GPT-5, released just six months earlier, which scored 31.9%.

Image source: Overview of scores for various AI models across three modes
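The size of the Exploit Mode jump is easier to judge when worked out explicitly. This short Python calculation uses only the two scores quoted above; the variable names are illustrative.

```python
gpt_5_3_codex = 72.2  # Exploit Mode score (%), as quoted in the article
gpt_5 = 31.9          # Exploit Mode score (%), as quoted in the article

point_gain = round(gpt_5_3_codex - gpt_5, 1)                 # percentage points
ratio = round(gpt_5_3_codex / gpt_5, 2)                      # times higher
relative_gain = round((gpt_5_3_codex / gpt_5 - 1) * 100, 1)  # relative increase (%)

print(point_gain, ratio, relative_gain)  # 40.3 2.26 126.3
```

In other words, the newer model's score is about 40 percentage points higher, or roughly 2.3 times the earlier one.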

This indicates that when the goal is explicitly “draining funds,” AI demonstrates strong iterative planning and execution capabilities. However, on the defense side, performance is comparatively weaker. AI often stops searching after discovering a single flaw in detection mode, and struggles to perfectly patch complex logic without affecting normal contract operation. Security experts express concern that AI could significantly shorten the time from vulnerability discovery to attack development, raising the bar for DeFi project defenses.

Talent Acquisition and Defense Funding: OpenAI’s Strategy for AI Agent Ecosystem Security

Beyond tool development, OpenAI is actively investing in talent and ecosystem defense. It recently hired Peter Steinberger, founder of the open-source AI agent project OpenClaw, to lead the development of next-generation personal agents, with the project itself moving to a foundation supported by OpenAI.

To address potential cybersecurity risks posed by AI, OpenAI commits to a $10 million API budget through its cybersecurity grant program to support open-source defense tools and critical infrastructure research. This move is particularly timely following the recent Moonwell protocol incident, where a coding error in AI-generated code caused approximately $1.78 million in losses.

Looking ahead, as more AI-assisted stablecoin payment agents and automated wallets join the ecosystem, using benchmarks like EVMbench to distinguish models that merely describe vulnerabilities from those that can reliably deliver defenses will become critical to blockchain security.
