From the Spring Festival Gala robots to the battle over computing power and energy: Why does China hold the “trump card” in the AI era?

At the 2026 Spring Festival, while the world is still marveling at the parameter counts of OpenAI’s latest models, China has used its Spring Festival Gala to showcase another side of AI: embodied intelligence deployed in the physical world.

The program lineup of the 2026 CCTV Spring Festival Gala reads like an unprecedented “AI parade.” This is no longer the simple mechanical dance routine of a few years ago but a concentrated showcase of China’s robotics industry: “multiple companies, multiple models, all scenarios.”

- Magic Atom’s full-stack robot cluster served as the show’s best “hype squad,” dancing alongside Chen Xiaochun and Yi Yangqianxi in “Creating the Future” with movements so well coordinated that it was hard to tell human from machine.

- Unitree’s G1 and H2 robots demonstrated striking motion control in “Wu BOT”: without real-time remote control, they relied solely on onboard edge computing to keep their balance autonomously. When the red-robed H2 performed its sword dance, it showed that the “cerebellum” of Chinese robots, their motion-control system, has matured.

- In the skit “Grandma’s Favorite,” Songyan Power’s robots took on comedic roles, trading setups and punchlines, and made the leap from “props” to “actors.”

- Galaxy General’s Galbot G1 performed “picking walnuts” in a short film, a seemingly simple action that showcases cutting-edge tactile feedback and dexterous-hand technology.

This Spring Festival Gala sends a clear signal: China’s AI is no longer confined to servers; it has grown limbs and stepped into reality.

However, just as we cheer for the robots, Wall Street across the ocean has fallen into a quiet panic. It has realized that the “blood” powering these AIs, electricity, is running short. Shift our gaze from the Gala stage to Silicon Valley’s data centers, and we see the elephant in the room: power.

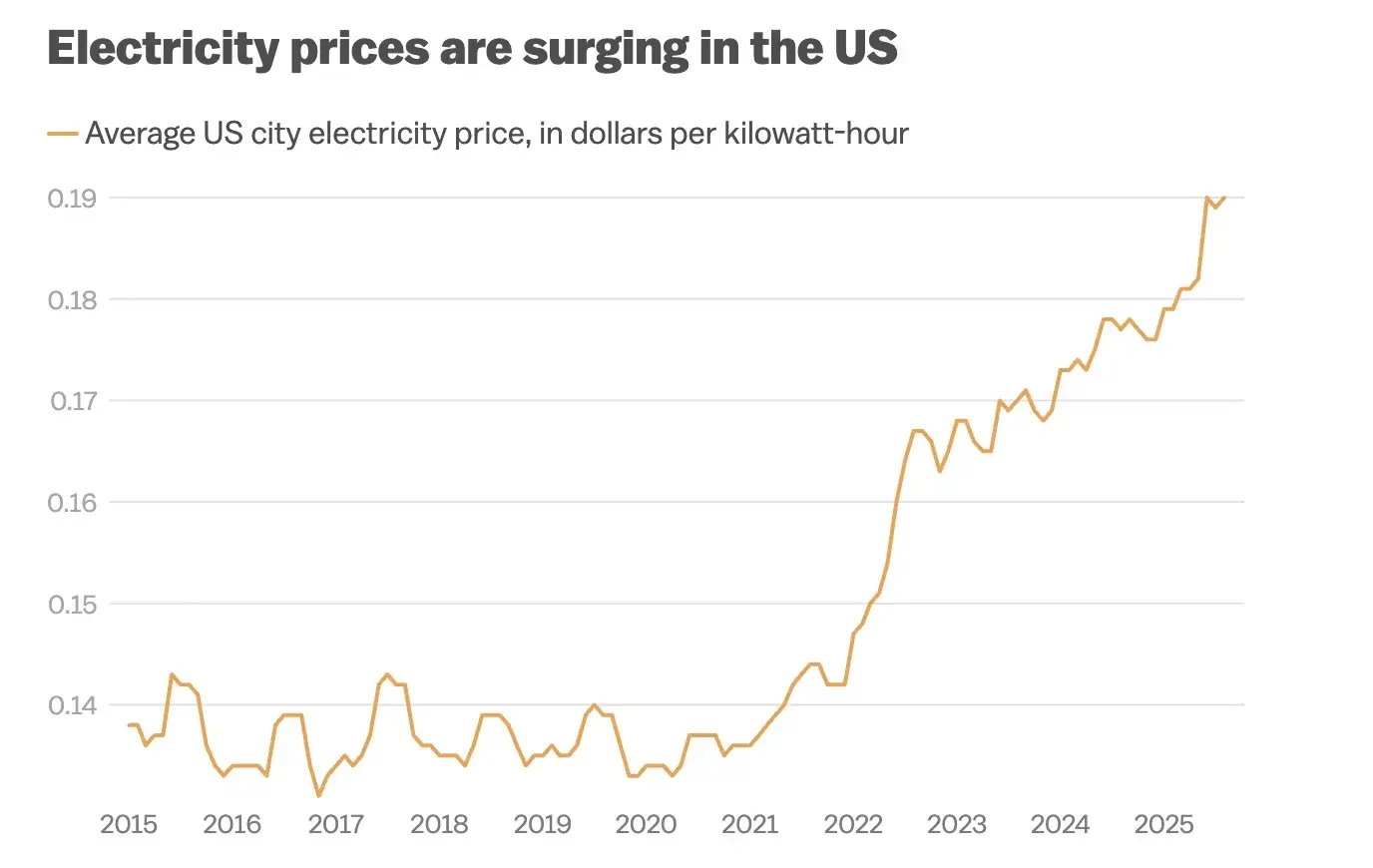

As of early 2026, U.S. residential electricity prices have surged 36%, reaching $0.18 per kWh. But this is only the surface; the core crisis is a collapse on the supply side. Training a GPT-4-level model consumes as much electricity as 100,000 households use in a year. Estimates suggest that by 2028, annual electricity consumption by U.S. data centers will soar to 600,000 GWh (600 TWh).

The U.S. power grid faces a double blow of “heart disease” and “vascular blockage.” A large share of its electricity still comes from aging fossil-fuel and nuclear plants that now face a wave of retirements. The grid is split into three poorly interconnected islands (East, West, and Texas), and approving an interstate transmission line often takes 15 years, which keeps Midwestern wind power from reaching data centers on the East Coast.

As Sam Altman put it, “Energy is money.” Today, Silicon Valley’s CEOs are no longer worried about chip quotas but about where to find enough electricity to run those chips.

If computing power is the engine of AI, then electricity is its fuel. In this energy game, China, thanks to a decade of forward planning, has built a strategic moat that the U.S. will find hard to replicate.

By 2025, China had completed 45 ultra-high-voltage (UHV) projects, and its UHV DC transmission lines exceeded 40,000 kilometers in total length. This “electric highway” can deliver abundant clean energy from the west to data centers in the east at millisecond speeds, or feed directly into the computing hubs of the “East Data, West Computing” initiative. China owns 35 of the world’s 37 largest high-voltage direct current (HVDC) systems, an infrastructure gap the U.S. cannot close in the short term.

AI’s heavy energy demand makes clean energy a necessity. In 2025, renewables surpassed 60% of China’s installed power capacity for the first time, with new wind and solar capacity exceeding 430 million kilowatts (430 GW). Nearly 40% of the electricity consumed across society now comes from green sources. While the U.S. is still struggling with nuclear plant delays, China has reached grid parity for solar and wind power, offering cheap, green energy to power-hungry AI data centers.

China is the world’s transformer manufacturing hub, with over 60% of global capacity. Transformer shortages are the biggest pain point in U.S. grid upgrades, with delivery times now stretching to 3 to 4 years. Whether through transshipment via Mexico or direct procurement, maintenance of the U.S. grid depends heavily on Chinese manufacturing. While U.S. data centers stall for lack of transformers, Chinese electrical equipment makers are running at full capacity to support the rapid build-out of domestic computing infrastructure.

The 2026 Spring Festival Gala is not just a celebration of robots; it is a snapshot of China’s industrial strength.

When we see Unitree’s robot dogs tumbling and Galaxy General’s robots working on stage, remember: behind every agile move lie not only advanced algorithms but also stable electricity, carried thousands of kilometers over ultra-high-voltage lines by a robust power grid.

In the second half of the AI revolution, the marginal cost of adding computing power will no longer hinge on chip process nodes (nanometers) but on the cost per joule. The U.S. has the most advanced algorithms, but China has the most powerful energy conversion and transmission system.
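As a rough illustration of what pricing energy per joule looks like, the U.S. residential price cited earlier ($0.18 per kWh) can be converted using the exact identity 1 kWh = 3.6 × 10^6 J. This is only a unit conversion of that one figure, not an estimate of what data centers actually pay:

$$
\frac{0.18\ \text{USD}}{1\ \text{kWh}} = \frac{0.18\ \text{USD}}{3.6 \times 10^{6}\ \text{J}} = 5 \times 10^{-8}\ \text{USD/J}
$$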

For investors, the logic is clear: in this gold rush, if Nvidia is selling the shovels, then China’s infrastructure builders in UHV, power equipment, and green energy control the real water source.