Meta smart glasses exposed: footage of users showering, having sex, and handling credit cards is sent to Kenya to train AI

A Swedish media investigation reveals that private images from Meta Ray-Ban smart glasses, ranging from bathroom scenes to nudity, are being transmitted to the screens of outsourced reviewers in Kenya, while store clerks tell consumers that “data stays local.”

Table of Contents

- Malfunctioning masks, voiceless clerks

- The triple identity of a pair of glasses

- Civil resistance within Bluetooth signals

- Structural collapse of consent mechanisms

According to a joint investigation by Sweden’s Dagens Nyheter and Göteborgs-Posten, Meta’s smart glasses are sending users’ private lives to Kenya.

The investigation found that when users activate the AI features of Meta Ray-Ban smart glasses (whether to recognize objects, translate menus, or answer questions), the images and audio captured by the glasses are sent to Meta’s servers in Luleå, Sweden, and in Denmark.

Then, this data is assigned to thousands of outsourced workers employed by Meta’s contractor Sama in Nairobi, Kenya, who review, annotate, and categorize it to train AI models.

A Kenyan reviewer told investigators:

“We see everything—from living rooms to nudity. These are real people just like us.”

The content they see includes bathroom scenes, sexual acts, accidentally captured credit card numbers, private conversations, and recordings of users watching adult content.

Malfunctioning masks, voiceless clerks

In theory, Meta’s system includes a safeguard: an automatic masking algorithm that blurs faces and sensitive information in images. But the Swedish investigation found that this system often fails. Reviewers can clearly see everyday scenes inside ordinary homes, filmed by users who are unaware that their glasses are recording or that the footage might appear on someone’s screen on the other side of the world.

Even more ironic is the information gap on the consumer side. The reporters visited ten chain stores in Stockholm and Gothenburg, mainly Synsam and Synoptik. When asked about privacy, many sales staff claimed that users have full control and that all data stays within the phone app and is not transmitted elsewhere.

This is completely contrary to reality.

Using the AI features of Ray-Ban Meta smart glasses requires data to be processed through Meta’s infrastructure; there is no purely local operation option. Voice recordings are stored in the cloud for up to a year to “improve AI systems,” with no opt-out mechanism besides manual deletion. Several store staff admitted they don’t even know what data the glasses are transmitting.

Petter Flink, a cybersecurity expert at the Swedish Data Protection Authority (IMY), warned that this marketing approach conceals the real privacy risks. Consumers have no idea about the backend processing. Meta’s response is a standard corporate line: the company complies with user agreements and GDPR, and the location of reviewers “does not affect compliance as long as rules are followed.”

The triple identity of a pair of glasses

The Swedish investigation reveals the first layer of the privacy problem with Meta’s smart glasses: you think your data is private, but strangers are reviewing it. And this is just the tip of the iceberg.

The second layer has already emerged across the Atlantic. U.S. Immigration and Customs Enforcement (ICE) agents have been spotted wearing Meta Ray-Ban glasses during law enforcement operations, taking photos of suspected undocumented immigrants in public, then cross-referencing with databases and social media platforms. The Verge cited insiders saying this is not an isolated case but a spreading pattern.

A consumer-grade wearable device, without court orders or search warrants, has become a tool for state surveillance.

The third layer is the most structurally threatening. In mid-February, The New York Times obtained internal Meta documents revealing that Reality Labs is developing a real-time facial recognition feature codenamed “Name Tag”: when wearers look at anyone, AI can cross-reference Meta platform data to instantly display the person’s name, personal info, and mutual friends. An internal strategy document ominously states: “We will launch in a dynamic political environment, where many civil society groups will focus resources elsewhere.”

In other words: Meta knows this feature will provoke backlash, so they plan to deploy it when opponents are least prepared.

These three layers mean that a $299 pair of sunglasses simultaneously acts as a silent data collector for AI training, a covert tool for law enforcement, and a social weapon for real-time identification. None of the people around the wearer, whether they are being recorded, identified, or scrutinized, ever gave their consent.

Civil resistance within Bluetooth signals

Systemic responses have been slow and frustrating. The Electronic Privacy Information Center (EPIC) in the U.S. has written to the Federal Trade Commission (FTC) demanding an investigation, but in today’s political climate, regulatory enforcement is uncertain. Europe’s GDPR exists, but Meta’s standard response is “we are compliant,” and the gap between actual data flows and compliance commitments was exposed by Swedish reporters visiting ten stores.

The first substantial response came from Swiss sociologist Yves Jeanrenaud. An independent developer, he released “Nearby Glasses” at the end of February: an Android app that scans Bluetooth Low Energy (BLE) broadcast identifiers to detect Meta or Snap smart glasses within 10-15 meters and issue alerts.

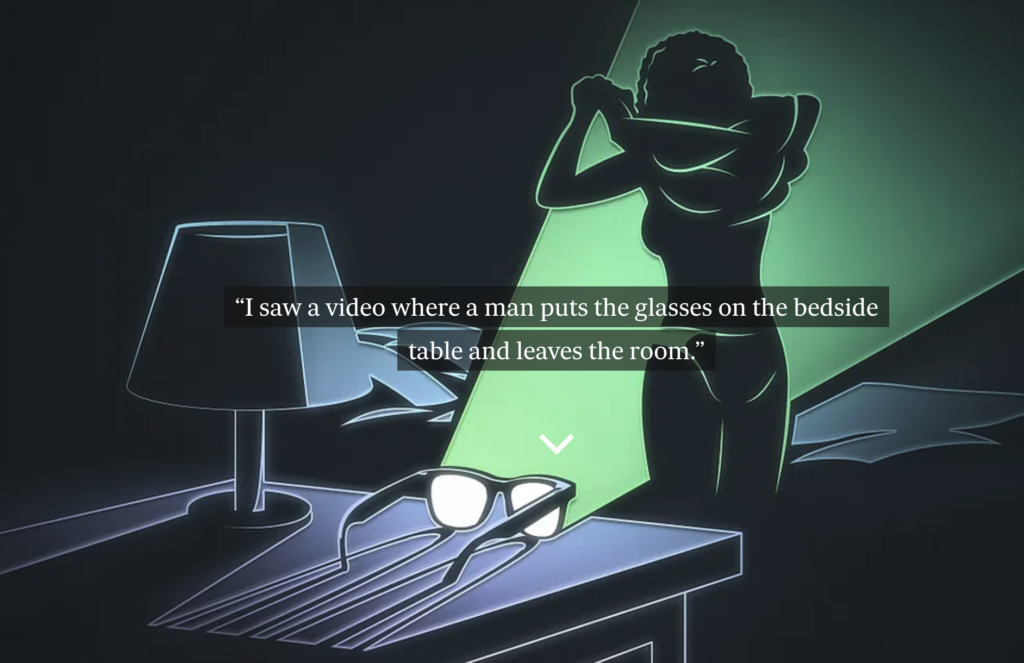
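The detection idea described above, matching nearby BLE advertisements against known vendors, can be sketched roughly as follows. This is an illustrative Python sketch, not the app's actual code (the app is an Android app). It assumes that the "broadcast identifier" is the manufacturer company ID carried in an advertisement, and it uses signal strength (RSSI) as a crude stand-in for the 10-15 meter range. The `Advertisement` type, the company IDs, and the RSSI cutoff are all hypothetical placeholders.

```python
from dataclasses import dataclass

# Hypothetical placeholder company IDs, NOT the real registered values
# for any vendor. A real tool would look these up in the official
# Bluetooth company identifier list.
WATCHED_COMPANY_IDS = {
    0xFFF0: "Vendor A (placeholder)",
    0xFFF1: "Vendor B (placeholder)",
}

# Assumed RSSI cutoff in dBm. Signal strength is only a rough proxy
# for distance and varies with walls, bodies, and hardware.
RSSI_THRESHOLD_DBM = -75


@dataclass(frozen=True)
class Advertisement:
    """One observed BLE advertisement (hypothetical simplified record)."""
    address: str
    company_id: int
    rssi_dbm: int


def detect_glasses(ads, watched=WATCHED_COMPANY_IDS, min_rssi=RSSI_THRESHOLD_DBM):
    """Return an alert string for each ad from a watched vendor that is strong enough."""
    alerts = []
    for ad in ads:
        vendor = watched.get(ad.company_id)
        if vendor is not None and ad.rssi_dbm >= min_rssi:
            alerts.append(
                f"Possible smart glasses nearby: {vendor} "
                f"({ad.address}, {ad.rssi_dbm} dBm)"
            )
    return alerts
```

A scan loop would feed observed advertisements into `detect_glasses` and surface any returned alerts to the user. Matching on vendor alone can produce false positives, since the same company ID may appear on other products from that vendor.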

Jeanrenaud said his motivation was “seeing the scale of abuse and inhumanity involved in these smart glasses.” Cases include people secretly filming customers at a beauty salon, recording in courtrooms and clinics, and keeping the camera running in public restrooms. Each has real examples behind it, not just hypotheticals.

Structural collapse of consent mechanisms

Returning to the core issue: Meta’s smart glasses have become a privacy crisis because they undermine the fundamental premise of modern privacy frameworks: the “notice and consent” model.

Traditionally, companies must inform you and obtain your consent before collecting your data. But the way Ray-Ban Meta glasses operate collapses this logic in three ways at once. For the wearer, store clerks claim that data stays local, but in reality it is sent on to Denmark and Kenya.

If you are the one being photographed, you are not a Meta user, have not clicked any consent button, and don’t even know you’re being recorded.

Kenyan reviewers, for their part, are forced to watch strangers’ most private moments, and the psychological toll is never reflected in Meta’s ESG reports.

The strength of privacy protection has never depended solely on legal texts but on whether companies believe you will pursue consequences. Meta apparently has calculated: you won’t…