Colombian Court Rejects Appeal for AI Writing, Then Gets Flagged By Its Own AI Detector

In brief

- Colombia’s Supreme Court rejected a cassation appeal after AI detectors flagged it as machine-generated.

- Lawyers ran the ruling through the same tools and found it also appeared AI-written.

- Experts and studies showed AI-detection software produced unreliable and inconsistent results.

The Supreme Court of Colombia denied a cassation appeal, arguing that it was generated by AI. But the same tool the court used to assess the appeal’s purported AI origins indicated that the court’s own ruling had also received generative help. Is it a double standard by the court, or faulty tools at play?

“Faced with a well-founded suspicion that the brief submitted by the attorney had not been drafted by the legal professional himself, the court submitted the text to the Winston AI tool,” the court argued. “Its analysis indicated that the document contained only 7% human content, evidencing a marked influence of automated writing and leading to the conclusion that it had been produced using artificial intelligence.”

After running the analysis with other tools that provided similar results, the court ruled that “since the filing cannot be regarded as a duly submitted pleading, its dismissal as inadmissible is required.”

But when the court’s ruling faced similar scrutiny from legal experts, it showed similar results. “I submitted the text of Auto AP760/2026 from the Supreme Court to the same Winston AI software cited in the ruling,” attorney Emmanuel Alessio Velasquez wrote on X on Tuesday. “The result: The document contains 93% AI-generated text.”

I submitted the text of ruling AP760/2026 from the @CorteSupremaJ to the same Winston AI software cited in the decision. The result was that the document shows 93% “AI-generated text.” https://t.co/xTm2jI4d70 pic.twitter.com/lpSHuRjEZ4

— Emmanuel Alessio Velásquez (@EmmanuVeZe) March 3, 2026

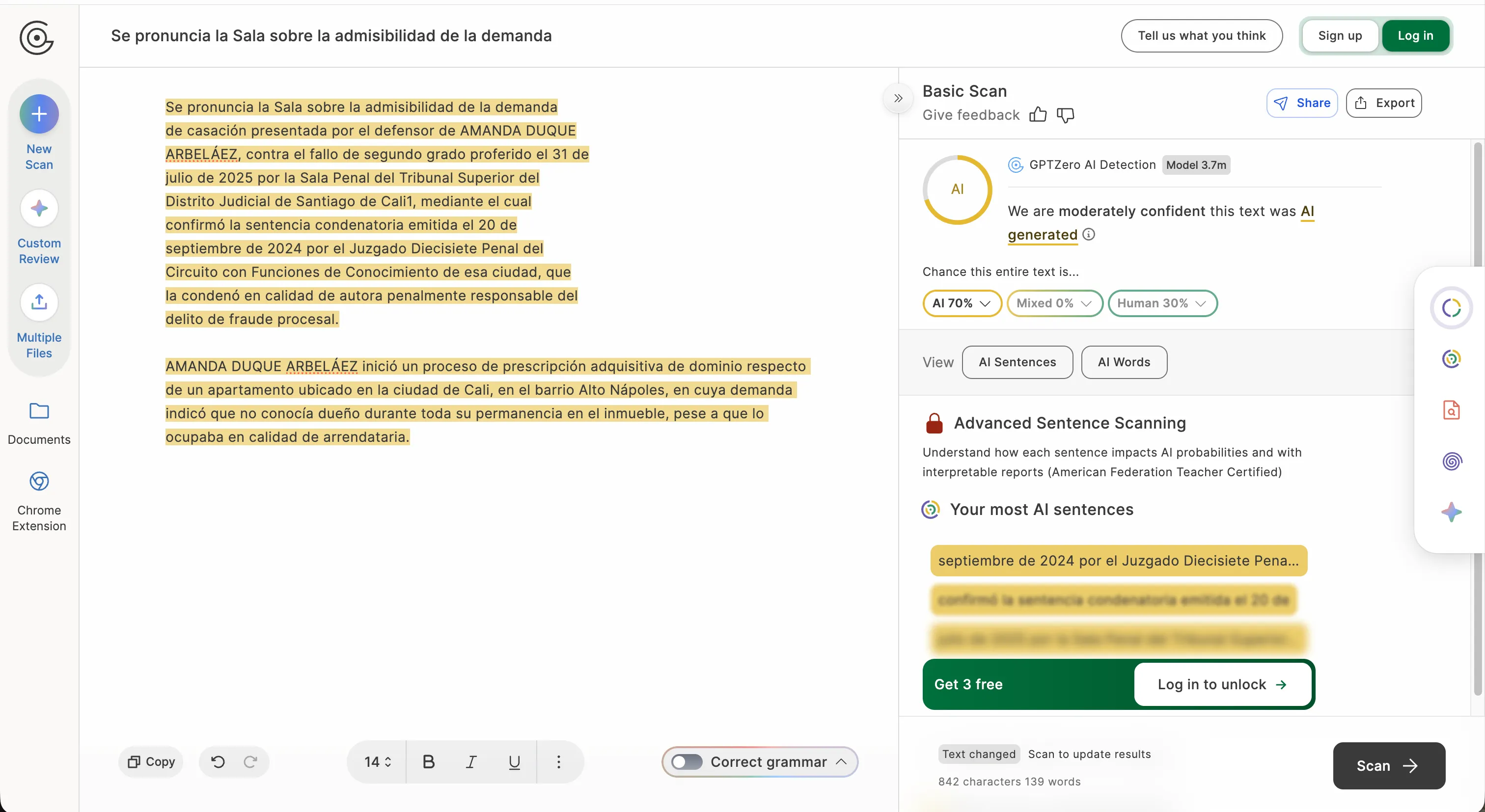

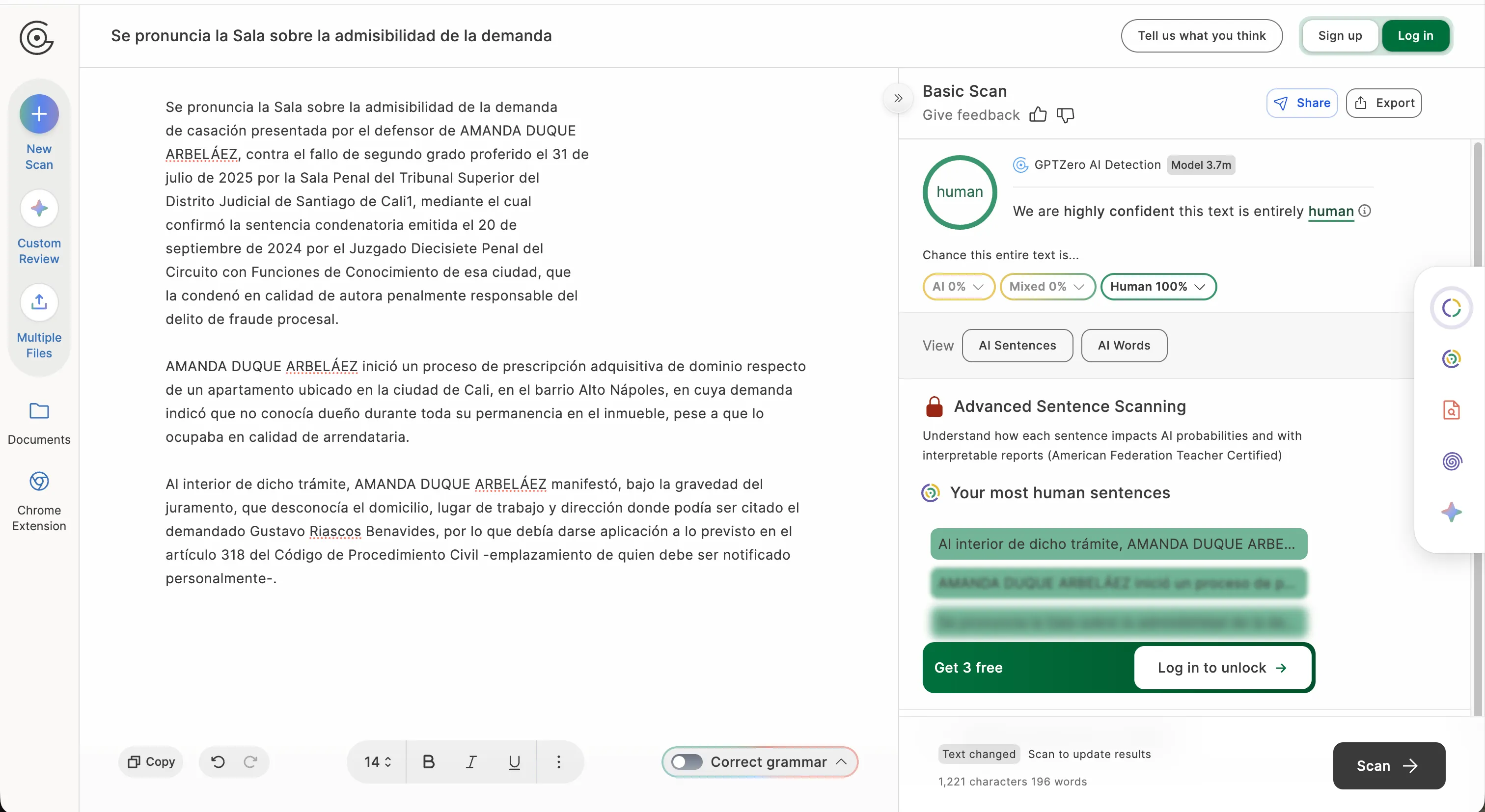

“If the very ruling that condemns the use of artificial intelligence scores that percentage, the methodological fragility of using these detectors as argumentative support becomes self-evident,” he argued in a subsequent tweet.

Within hours of the court posting a thread about the decision on X, lawyers began running their own tests. Velasquez’s post went viral in legal circles, accumulating tens of thousands of views.

We ran the test on the court’s ruling as well, and things initially didn’t look great. When GPTZero scanned only the opening words of the court text, it returned a 100% AI result.

When the same tool processed a longer version including the factual background section, it reversed course entirely: 100% human. The tool is simply not reliable enough to be trusted in court or in situations that would require a high degree of certainty.

Colombian attorneys reacted quickly with their own experiments. Criminal defense lawyer and lecturer Andres F. Arango G. submitted a court filing from 2019, years before the large language models these tools were trained to detect even existed, and it came back claiming 95% AI generation. “These tools then invite you to ‘humanize’ the article through their paid services,” he wrote on X, noting an obvious commercial incentive baked into the detection business model.

Nicolas Buelvas ran his 2020 undergraduate thesis, on the principle of trust in criminal law, through a detector. The result? 100% AI.

Dario Cabrera Montealegre, another Colombian attorney, pointed out the contradiction in relying on AI to combat AI. “The court is using AI to determine if there was AI,” he said. “Something contradictory from my practical point of view.”

The Court uses AI to determine whether there was AI…!? Something contradictory from my practical point of view… If it is rejected, it should be because we, as humans, detected it

— Darío Cabrera Montealegre (@dalcamont_daro) March 2, 2026

Beyond legal circles, tech-savvy observers also pointed out the dangers of excessive reliance on AI flagging tools. “To date, there is no publicly accessible tool that can accurately define the percentage of AI use when drafting a text,” Carlos Alejandro Torres Pinedo argued. “What is worse: No one can publicly verify the source code behind these detection platforms. How can they be used to delegitimize someone’s right of access to justice?”

The technical reasons for these failures are well-documented. AI detectors measure statistical patterns: sentence length, vocabulary predictability, and a quality that researchers call “burstiness,” which refers to the natural rhythm variation humans introduce in their writing. The problem is that formal legal prose, academic writing, and texts produced by people who write in a second language share many of those same statistical signatures.

Studies on AI detection

A 2023 study published in Patterns found that more than 61% of Test of English as a Foreign Language (TOEFL) essays by non-native English speakers were incorrectly flagged as AI-generated. A systematic review by Weber-Wulff that same year concluded that no available tool is either precise or reliable. Turnitin acknowledged in June 2023 that its own detector produced higher false positive rates when the AI content level in a document fell below 20%. Even OpenAI took down its own AI detection tool in 2023, citing its low rate of accuracy.

Universities have been grappling with this for years. Vanderbilt disabled Turnitin’s AI detector in 2023 after estimating it would generate around 3,000 false positives annually. The University of Arizona dropped AI-detection features from its plagiarism software after a student lost 20% of a grade on a false positive. A 2024 case at UC Davis saw 17 linguistics students flagged, 15 of them non-native English speakers.

The pattern is consistent. The tools penalize the people who write most formally, most repetitively, or most carefully, exactly the profile that lawyers, academics, and second-language speakers fit.
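To make the “burstiness” idea concrete, here is a minimal Python sketch of one possible proxy: the variation in sentence length across a text. This is an illustration only. The function names and the formula (standard deviation of sentence length divided by the mean) are assumptions made for this example, not the method used by Winston AI, GPTZero, or any other commercial detector, whose internals are not public.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on sentence-ending punctuation and count the words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Hypothetical proxy: population std dev of sentence length over its mean.

    A score of 0.0 means every sentence has the same number of words.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.fmean(lengths)


# Formulaic prose: three sentences of five words each.
uniform = (
    "The court denied the appeal. "
    "The lawyer filed the brief. "
    "The judge signed the order."
)

# Uneven rhythm: a one-word sentence, a long one, then a one-word question.
varied = "Denied. The lawyer, who had spent weeks on the brief, filed it anyway. Why?"

print(burstiness(uniform))
print(burstiness(varied))
```

The formulaic sample scores zero and the uneven one scores well above it, regardless of who or what wrote either. A signal like this reflects writing style rather than authorship, which is consistent with the false positives on legal filings and theses described above.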

The cultural fallout has bordered on absurdity. Across writing and journalism circles, people have started avoiding em dashes in their work, not because of any style guide, but because AI language models use them frequently and detection tools (and people) have taken notice. Writers are self-editing natural punctuation out of fear of algorithmic suspicion. Beyond the written word, artists have suffered the wrath of moderators and colleagues for making art pieces that look AI-generated.

We are in the world which real artists is being punished because he’s the victim of these thief called AI artists? #Savehumanartist #noAIart #NoAI #SavefutureArt pic.twitter.com/yTQAeyc8SR

— Benmoran artist (@benmoran_artist) December 27, 2022

Colombia’s two rulings (AC739-2026, in which the Civil Chamber in February fined a lawyer for citing 10 nonexistent AI-generated precedents, and AP760-2026) are emerging as some of the region’s first judicial decisions directly confronting the misuse of generative AI in legal filings.

Colombia’s judicial branch adopted formal guidelines in December 2024 that regulate how judges and court staff can use artificial intelligence. The rules allow AI to be used freely for administrative and support tasks, such as drafting emails, organizing agendas, translating documents, or summarizing texts, while permitting more sensitive uses, like legal research or drafting procedural documents, only with careful human review. The guidelines explicitly prohibit relying on AI to evaluate evidence, interpret the law, or make judicial decisions, emphasizing that human judges remain fully responsible for all rulings and must disclose when AI tools were used in preparing judicial materials. These guidelines, compiled in the “PCSJA24-12243” agreement, could be used to contest a decision such as AP760-2026.

The Supreme Court has not yet issued any additional statement in response to the backlash over its choice of detection tools. The ruling didn’t have em dashes, either.