Elon Musk on Seedance 2.0: "It's happening fast"; ByteDance says the model is still far from perfect

ByteDance’s Video Model Seedance 2.0 Goes Viral Overseas; Elon Musk Remarks “It’s Happening Fast”

The model is now fully integrated with Doubao and Jimeng, and trial access is open to enterprise users. A series of major upgrades, including Doubao Large Model 2.0, is scheduled for release on February 14.

Table of Contents

- Elon Musk retweets, boosting international attention

- From internal testing to full integration: Doubao, Jimeng, and Volcano Ark advancing simultaneously

- Multimodal, long narrative, and synchronized audio-visuals targeting “professional production scenarios”

- “Still far from perfect”: clear limitations and shortcomings noted in product description

- February 14 release imminent, upgrade pace becomes a new variable

ByteDance’s video model Seedance 2.0 has gone viral overseas, with Elon Musk remarking that “It’s happening fast.” The model is now fully integrated with Doubao and Jimeng, and trial access is open to enterprise users. Its multimodal input and multi-camera long-narrative capabilities target professional production scenarios directly. ByteDance acknowledges that although the product leads the industry, it is still far from perfect, and says it will keep exploring deep alignment between large models and human feedback. Doubao Large Model 2.0 will be released on February 14.

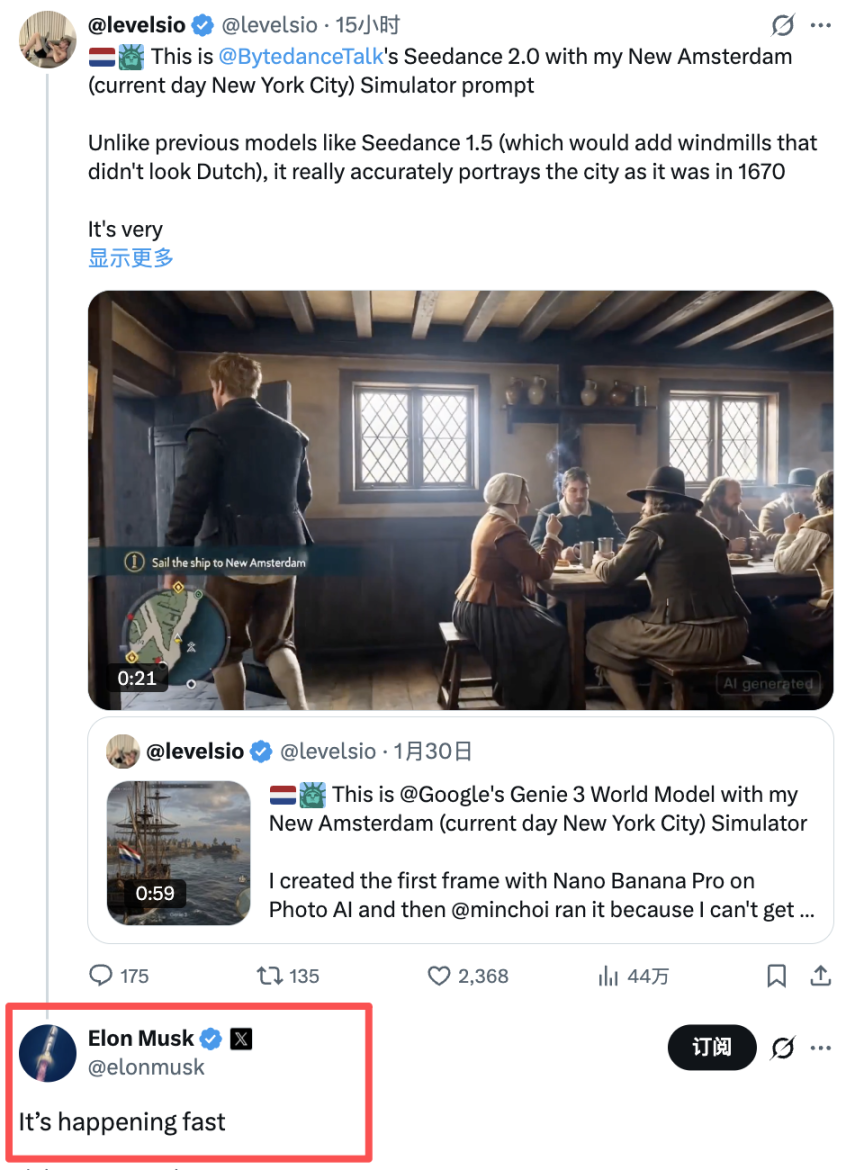

Generative video models are moving rapidly into mainstream products and enterprise toolchains. Seedance 2.0 quickly gained popularity overseas after ByteDance released it, and Musk’s retweet on X with the comment “It’s happening fast” drew further market attention to the leap in video generation capabilities.

The latest developments come from social platforms. Musk commented on Seedance 2.0-related posts on X, remarking on how quickly the field is developing, and his comment has kept overseas discussion of the model growing. Attention to the model’s controllability and its suitability for production use has risen as well.

ByteDance signaled clear productization intentions today. Seedance 2.0 has officially launched, is fully integrated with Doubao and Jimeng, and is available for user trials at the Volcano Ark Experience Center. The model emphasizes synchronized audio-visual output, multi-camera long narrative, and controllable multimodal generation, targeting a broader range of creators and commercial content scenarios.

However, the company remains cautious in its statements. ByteDance’s official Weibo account states that Seedance 2.0 “is still far from perfect” and that its generated results still have many flaws. Future efforts will focus on deep alignment between large models and human feedback. For market participants, this combination of “high exposure + rapid productization + continuous iteration” reinforces expectations of accelerating competition in the video generation space.

Elon Musk retweets, boosting international attention

Since its internal testing phase, Seedance 2.0’s multimodal creation approach and built-in camera movement have attracted widespread interest globally. Musk’s retweet on X, with the comment “It’s happening fast,” has extended the model’s reach beyond tech circles to the broader tech investment and product communities.

Although Musk’s public remarks did not delve into technical details, they reinforced the narrative of rapid development. This signal helps increase external focus on ByteDance’s multimodal capabilities and may marginally influence valuation expectations across related industry chains.

From internal testing to full integration: Doubao, Jimeng, and Volcano Ark advancing simultaneously

ByteDance disclosed today that Seedance 2.0, Doubao’s video generation model, is now available in the Doubao app, desktop client, and web version, and is fully integrated with the Doubao and Jimeng products. It is also available for user trials at the Volcano Ark Experience Center.

For enterprise users, ByteDance plans to launch Seedance 2.0 API services at Volcano Ark by mid to late February to better support creative applications. This indicates that Seedance 2.0 is not only a creative tool but also preparing for more standardized B2B deployment.

Multimodal, long narrative, and synchronized audio-visuals targeting “professional production scenarios”

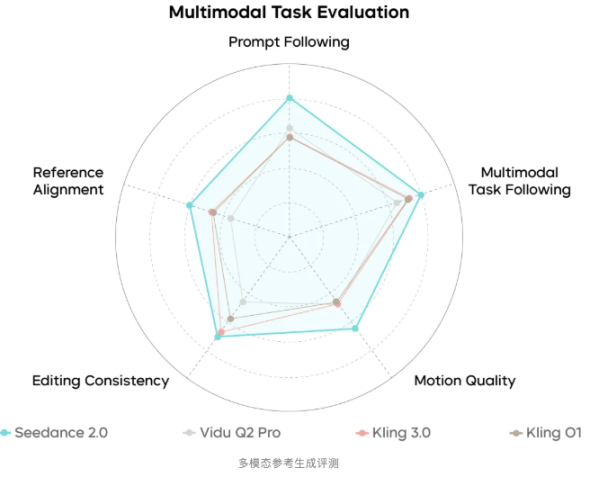

ByteDance emphasizes that Seedance 2.0 aims to meet the standards of “professional production scenarios” in quality and controllability. Key features include:

- Multimodal input: the model accepts text, images, audio, and video, which can serve as references for composition, actions, camera movements, effects, and sound.

- Synchronized original audio-visual output with multi-track support, including background music, environmental sounds, or voice-over, aligned with visual rhythm.

- Multi-camera long narrative with “directorial thinking,” capable of automatically parsing narrative logic, generating shot sequences, and maintaining consistency in characters, lighting, style, and atmosphere.

- New video editing and extension capabilities, enhancing “director-level control” workflows.

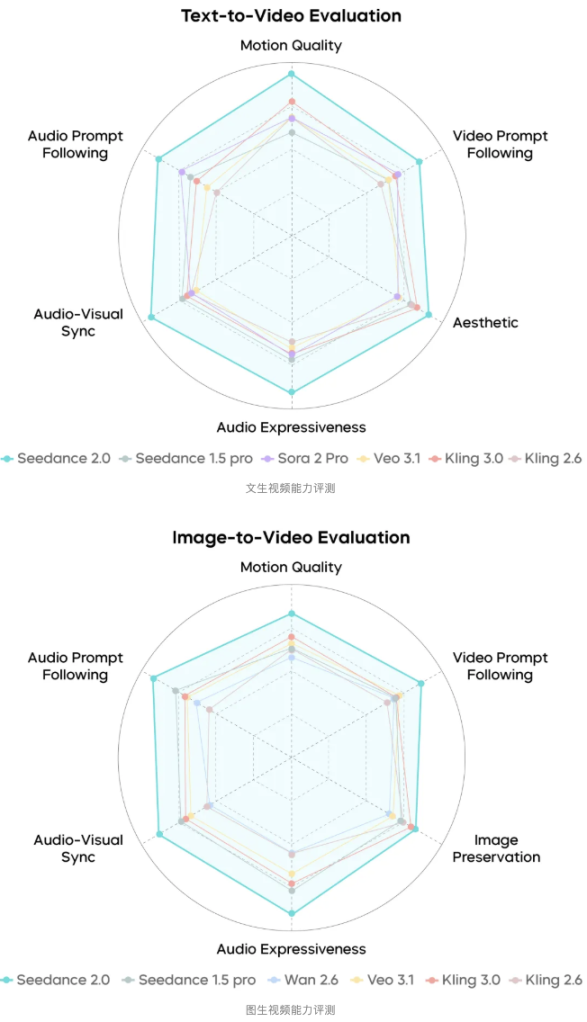

ByteDance states that Seedance 2.0 has effectively addressed challenges such as adherence to physical laws and long-term consistency, and that its generation of motion scenes has reached the industry’s state-of-the-art (SOTA) level.

“Still far from perfect”: clear limitations and shortcomings noted in product description

ByteDance notes that Seedance 2.0’s overall performance is industry-leading but still has room for optimization, including detail stability, multi-character matching, multi-subject consistency, text fidelity, and complex editing effects. The company will continue exploring deep alignment between large models and human feedback.

Compliance and usage boundaries are now clearer. ByteDance states that Seedance 2.0 currently restricts using real human images or videos as primary references. If real human references are needed, verification or authorization from the individual is required. These restrictions will directly impact some commercial material production and deployment workflows.

February 14 release imminent, upgrade pace becomes a new variable

ByteDance’s Volcano Engine has confirmed that on February 14, 2026, it will release a series of major upgrades for Doubao, including Doubao Large Model 2.0, Seedance 2.0 for audio-visual creation, and Seedream 5.0 Preview for image creation. The company states that foundational model capabilities and enterprise-level agent functions will see significant improvements.

With Musk remarking that “It’s happening fast,” market focus will shift to two key questions: first, whether the pace of Seedance 2.0’s API launch and enterprise adoption matches the product narrative; second, whether improvements in consistency, lip-sync, and complex editing can support its transition from “viral demo” to “stable productivity.”